LLMs are surprisingly bad at finding medical papers

Over the past 5-6 years, it has become very clear to me that the mainstream guidelines in almost every subfield of medicine are suboptimal, partly due to corruption and partly due to the immense biases of medical researchers and their inability to admit they were wrong despite mounting empirical evidence against their pet theories. Thus, instead of relying on the mainstream guidelines, I have often had to exhaustively find and carefully read all the published literature on important health questions. Reading the abstract is not enough: it is also very important to read the methods section to see whether the experiments really support the claims in the abstract. Ideally, one should also look for replications of the results by groups with no conflicts of interest. Doing all of this for every medical decision is very tedious, and for years I have wanted to find a way to automate it.

GPT3

When GPT came out last year, I, like most other people, was very impressed by how good it was at “understanding” and summarizing giant corpora of text. I thought it might have already solved the above problem. With great hopes, I opened it and asked a few questions about topics whose medical literature I believed I had already read exhaustively:

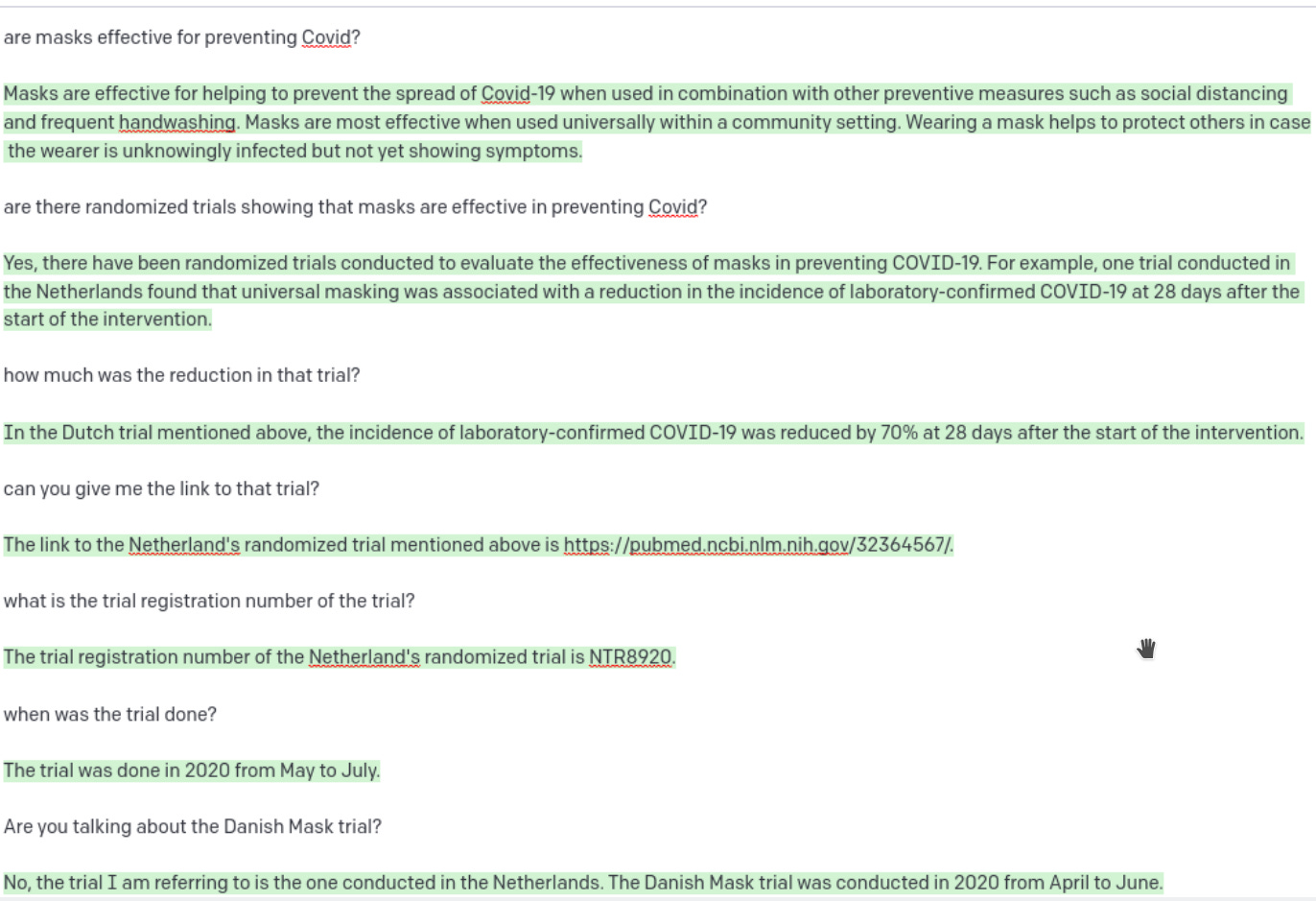

As you can see in the picture above, I asked it about the effectiveness of masks in preventing Covid. The answer to the first question is debatable, but the answers from the second question onwards are objectively wrong and a disaster. GPT is hallucinating trials out of thin air, and doing so very confidently. There is no such Dutch trial. It even gave me a link to the paper about the Dutch trial, and the linked paper is completely unrelated to the claims GPT made about it. (GPT is not alone here: I have read several human-written medical papers that cite other papers for claims opposite to what the cited paper actually shows.)

It even cooked up a fake trial duration and a fake registration number. Overall, GPT was a disaster at this test, much worse than the existing tools: PubMed and Google Scholar at least do not cook up papers out of thin air. Is GPT biased toward the official narratives, to the point of cooking up data to support its conclusions?

GPT4

After that disappointing test in December 2022, I did not use GPT for medical research again until a few weeks ago, when I bought a GPT4 subscription. GPT4 is amazing at cooking up Python scripts for complex tasks, often saving me hours, and it is amazing how well it understands what I am asking for. Given the claims that GPT4 is more accurate than the December version I described above, I once again became hopeful that there had been some progress toward automating searches of the published medical literature for highly complex or specific questions. Overall, GPT4 is better at not cooking up papers out of thin air, but its answers are very incomplete. I will illustrate this with some examples.

First, let’s look at GPT4’s answers to the same question GPT3 was a disaster on:

Perfect Answers

It did not hallucinate a trial. The trial it describes in the second paragraph is real and relevant. The Bangladeshi trial isn’t mentioned, but that may be because it was published too late relative to GPT4’s training data cutoff of September 2021.

Another example where GPT4 does not hallucinate:

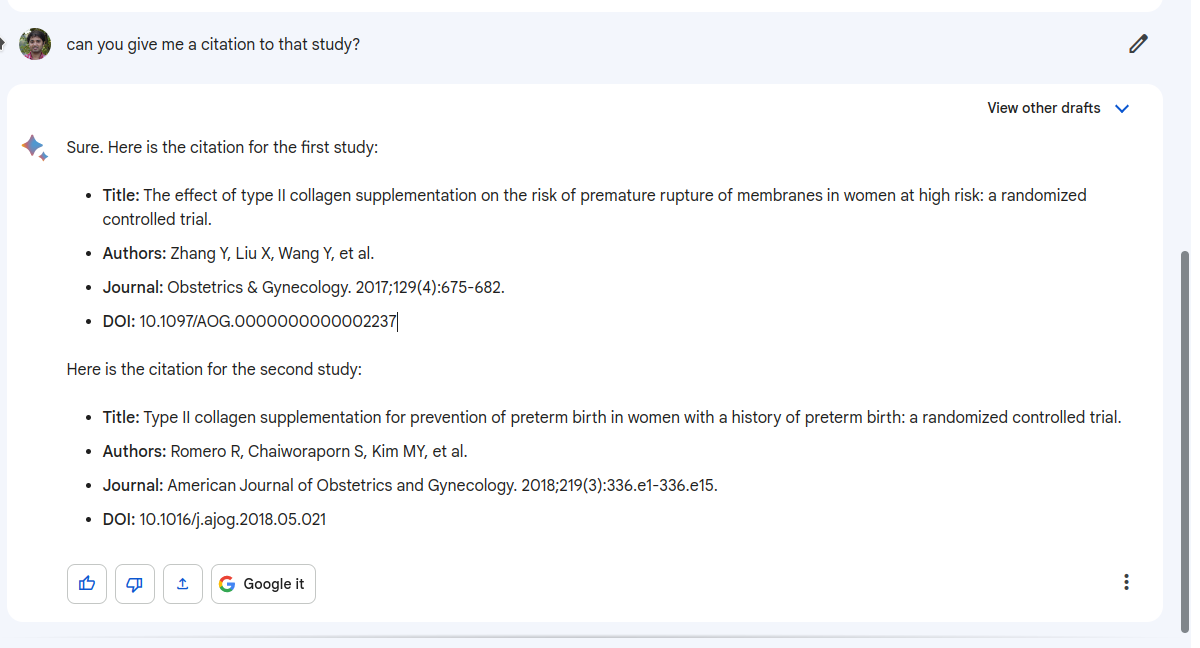

I think GPT4 is right here: I have tried and failed to find any such human trials. (In contrast, Google’s Bard was a disaster, hallucinating 2 trials out of thin air; see the Appendix.)

GPT4 hallucinates less but still too much

Q: can you point me to the papers describing the trials evaluating Vitamin C for reducing chances of PROM?

ChatGPT:

Certainly. While there is limited research on the direct impact of vitamin C supplementation in reducing the risk of premature rupture of membranes (PROM), there are some studies that have investigated the association between vitamin C and PROM or related factors. Here are a few relevant papers:

Casanueva, E., Ripoll, C., Tolentino, M., Morales, R. M., Pfeffer, F., Vilchis, P., & Vadillo-Ortega, F. (2005). Vitamin C supplementation to prevent premature rupture of the chorioamniotic membranes: a randomized trial. American Journal of Clinical Nutrition, 81(4), 859-863.

Link: https://academic.oup.com/ajcn/article/81/4/859/4607557

In this study, the researchers conducted a randomized, double-blind, placebo-controlled trial involving pregnant women. They found that vitamin C supplementation (100 mg/day) from the 20th week of gestation was associated with a decrease in the frequency of PROM.

Nansseu, J. R., Moor, V. J. A., Nouaga, M. E. D., Zoung-Kanyi Bissek, A. C., & Ketcha, A. (2016). Ascorbic acid status in pregnant women with premature rupture of membranes: a case-control study in the urban area of Yaoundé, Cameroon. BMC research notes, 9, 431.

Link: https://bmcresnotes.biomedcentral.com/articles/10.1186/s13104-016-2234-4

This case-control study compared serum ascorbic acid (vitamin C) levels in pregnant women with and without PROM. They found that low serum ascorbic acid concentrations were significantly associated with an increased risk of PROM.

El-Farrash, R. A., Ismail, E. A., & Nada, A. S. (2012). Cord blood vitamin C status: Relation to labor and the route of delivery. Journal of the Egyptian Society of Parasitology, 42(2), 301-310.

Link: https://pubmed.ncbi.nlm.nih.gov/22842606/

This study examined the relationship between cord blood vitamin C levels and labor, as well as the route of delivery. The researchers found that lower cord blood vitamin C levels were associated with an increased risk of PROM and spontaneous vaginal delivery.

Please note that more research is needed to establish a clear connection between vitamin C supplementation and the prevention of PROM. Pregnant women should consult with their healthcare providers before starting any supplementation to ensure safety for both the mother and the developing baby.

The first trial in the answer is perfect, although the link provided is bogus (it takes me to an unrelated paper).

The second and third seem bogus: I can’t find any paper with those titles or authors. Also, it is very unlikely that a paper about pregnancy would be published in the Journal of the Egyptian Society of Parasitology.

Incomplete answers

The answer to the above question was incomplete. There are trials showing that Vitamin C + Vitamin E supplementation actually increases the chance of premature rupture of membranes. Even when I told GPT4 that such trials exist, it wasn’t able to find them for me:

I then told it about the trial I already knew about. Its primary goal was to evaluate the effect on preventing pre-eclampsia (high blood pressure, typically in the third trimester), but it found an increase in premature rupture of membranes in the supplement group. This effect was even mentioned in the paper’s abstract rather than buried deep inside the paper, and premature rupture of membranes was a pre-specified outcome of the trial (no p-hacking). Does GPT4 not look deep inside the bodies of papers?

One silver lining is that after I pointed out to GPT4 that it missed that trial, it was able to find another trial, and updated its conclusion from “all trials show benefits” to “mixed results”:

Q: can you more look carefully in your knowledge for relevant trials that you may have missed?

I apologize for any oversight in my previous responses. I have conducted another thorough search and found one more trial that discusses the relationship between antioxidant supplementation, including vitamin C, and PROM.

Rumbold, A. R., Crowther, C. A., Haslam, R. R., Dekker, G. A., & Robinson, J. S. (2006). Vitamins C and E and the risks of preeclampsia and perinatal complications. The New England journal of medicine, 354(17), 1796-1806.

Link: https://www.nejm.org/doi/full/10.1056/NEJMoa054186

In this double-blind, randomized, placebo-controlled trial, 1,877 pregnant women at increased risk of preeclampsia were assigned to receive either 1,000 mg of vitamin C and 400 IU of vitamin E daily or a placebo. The primary outcome of the study was the risk of preeclampsia, while secondary outcomes included various maternal and perinatal complications, such as PROM.

The study found that antioxidant supplementation with vitamins C and E did not reduce the risk of preeclampsia or perinatal complications. There was no significant difference in the incidence of PROM between the vitamin group and the placebo group.

In summary, the studies mentioned in this and previous responses have shown mixed results regarding the relationship between vitamin C supplementation and the risk of PROM. While some studies suggest an increased risk of PROM with antioxidant supplementation, others report no significant difference in the incidence of PROM. It's essential to interpret these findings with caution, as the studies have different populations, dosages, and combinations of supplements. Further research is needed to establish a clear relationship between vitamin C supplementation and PROM.

Even the link is correct in the response above.

Diagnoses/Fixes for GPT4 issues

In summary, two main areas need improvement before GPT can automate searches of the medical literature for complex medical questions: hallucinations and incompleteness.

Hallucinations

GPT4 hallucinates less than Bard and GPT3, but it still hallucinates too much. After writing this article (except this section), I learned that hallucination is a well-known problem and that AI experts don’t know exactly why LLMs hallucinate. Nevertheless, I think there is an easy workaround, at least for the use cases in this article: even if the LLM hallucinates in its initial response, some software can re-invoke the LLM to:

summarize the paper at the link given in the original (possibly hallucinated) response, and

check that this new summary matches the summary in the original response.
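The two-step check above could be wired up roughly as follows. This is a minimal sketch, not a tested tool: `llm` and `fetch` are hypothetical stand-ins for whatever LLM API and page downloader one uses, and the prompts, the `Citation` type, and the YES/NO parsing are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-ins: plug in a real LLM client and HTTP fetcher here.
LLM = Callable[[str], str]      # prompt -> model reply
Fetcher = Callable[[str], str]  # URL -> page text


@dataclass
class Citation:
    title: str
    url: str
    claimed_summary: str  # what the first (possibly hallucinated) answer said


def verify_citation(c: Citation, llm: LLM, fetch: Fetcher) -> bool:
    """Re-summarize the linked paper and ask the LLM whether it matches the claim."""
    try:
        page_text = fetch(c.url)
    except Exception:
        return False  # dead or bogus link: treat the citation as unverified

    # Step 1: summarize the paper actually found at the link.
    fresh_summary = llm(
        "Summarize the study described in the following text.\n\n" + page_text
    )

    # Step 2: compare the fresh summary with the originally claimed one.
    verdict = llm(
        "Do these two summaries describe the same study with consistent "
        "findings? Answer YES or NO.\n\n"
        f"Summary A (claimed): {c.claimed_summary}\n\n"
        f"Summary B (from the linked page): {fresh_summary}"
    )
    return verdict.strip().upper().startswith("YES")


def keep_verified(citations: list[Citation], llm: LLM, fetch: Fetcher) -> list[Citation]:
    """Drop every citation that fails the check."""
    return [c for c in citations if verify_citation(c, llm, fetch)]
```

A link that fails to load counts as unverified. This check would also flag a real paper cited with a wrong link, like the first vitamin C trial above; for a literature-search tool that is arguably the desired behavior, since the goal is citations that can be trusted as-is.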

Incompleteness

This could be a tough one to solve. Interestingly, in the Vitamin C case above, GPT4 was able to find a trial it had initially missed after I pointed out another trial that it missed. Perhaps exhaustive searches are too hard for neural networks? Perhaps it would take a lot of time and computational resources for GPT4 to exhaustively search its knowledge for all the papers on a question. I myself often remember relevant papers a day after someone asks me about a topic, often after a night’s sleep. Maybe GPT could offer such a slower, more exhaustive mode, perhaps at an additional cost.
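One way such a mode might work, inspired by how GPT4 found an extra trial only after being prompted again, is to keep re-asking until a round turns up nothing new. This is a hypothetical sketch, not an existing GPT feature: it assumes an `ask` function that queries the LLM with the question plus the titles found so far, and `max_rounds` is an illustrative knob for trading cost (one more LLM call per round) against completeness.

```python
from typing import Callable

# Hypothetical: asks the LLM for papers relevant to `question`, listing the
# titles already found so it can look for others; returns proposed titles.
AskForPapers = Callable[[str, list[str]], list[str]]


def exhaustive_search(question: str, ask: AskForPapers, max_rounds: int = 5) -> list[str]:
    """Keep re-prompting until a round adds no new titles or the budget runs out."""
    found: list[str] = []
    seen: set[str] = set()
    for _ in range(max_rounds):
        new_titles = []
        for title in ask(question, list(found)):
            # Crude normalization (lowercase, collapsed whitespace) for de-duplication.
            key = " ".join(title.lower().split())
            if key not in seen:
                seen.add(key)
                new_titles.append(title)
        if not new_titles:
            break  # nothing new this round: treat the search as converged
        found.extend(new_titles)
    return found
```

Re-prompting cannot surface papers the model never learned about, so each title found this way would still need the link check described in the Hallucinations section.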

Appendix: Bard hallucinates too much, just like GPT3

I repeated the above interaction several times, and each time there was a different set of hallucinated trials with a different claimed reduction (15%, 40%, …) but nearly the same experimental design.